World Economic Forum Wants To Use AI To Automatically Censor Speech On The Internet

The World Economic Forum (WEF) proposed a new way of censoring online content that requires a small group of experts to train artificial intelligence on identifying “misinformation” and abusive content.

The WEF published an article Wednesday outlining a plan to overcome frequent instances of “child abuse, extremism, disinformation, hate speech and fraud” online, which human “trust and safety teams” cannot handle, according to the article's author, ActiveFence Trust & Safety Vice President Inbal Goldberger. Instead, the WEF proposed an AI-driven method of moderating online content in which subject matter experts provide training sets to the AI so it can learn to recognize and flag or restrict content that human moderators would deem dangerous.

The system works through “human-curated, multi-language, off-platform intelligence,” meaning input provided by expert sources, to create “learning sets” for the AI.

“Supplementing this smarter automated detection with human expertise to review edge cases and identify false positives and negatives and then feeding those findings back into training sets will allow us to create AI with human intelligence baked in,” Goldberger stated.

In other words, trust and safety teams can help the AI with anomalous cases, allowing it to detect nuances in content that a purely automated system might otherwise miss or misinterpret, according to Goldberger.

“A human moderator who is an expert in European white supremacy won’t necessarily be able to recognize harmful content in India or misinformation narratives in Kenya,” she explained. As the AI trains on more learning sets over time, it begins to identify the kinds of content that moderation teams would find offensive, reaching “near-perfect detection” at a massive scale.

Goldberger said the system would protect against “increasingly advanced actors misusing platforms in unique ways.”

Trust and safety teams at online media platforms, such as Facebook and Twitter, bring a “nuanced comprehension of disinformation campaigns” that they apply to content moderation, said Goldberger.

That includes working with government organizations to filter content communicating a narrative about COVID-19, for example. The Centers for Disease Control and Prevention advised Big Tech companies on what types of content to label as misinformation on their sites.

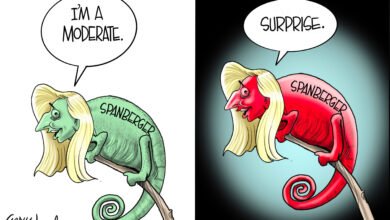

Social media companies have also targeted conservative content, including posts that negatively portray abortion and transgender activism, or contradict the mainstream understanding of climate change, by either labeling them as “misinformation” or blocking them entirely.

The WEF document did not specify how members of the AI training team would be decided, how they would be held accountable or whether countries could exercise controls over the AI.


Elite business executives who participate in WEF gatherings have a track record of proposals that expand corporate control over people’s lives. At the latest WEF annual summit, in May, the head of the Chinese multinational technology company Alibaba Group boasted of a system for monitoring individual carbon footprints derived from eating, travel and similar behaviors.

“The future is built by us, by a powerful community such as you here in this room,” WEF founder and chairman Klaus Schwab told an audience of more than 2,500 global business and political elites.

The WEF did not immediately respond to the Daily Caller News Foundation’s request for comment.

Content created by The Daily Caller News Foundation is available without charge to any eligible news publisher that can provide a large audience. For licensing opportunities of our original content, please contact licensing@dailycallernewsfoundation.org
